DJ Patil’s code of ethics for data science

2.5 quintillion bytes of data are created every day. It’s created by you when you’re commuting to work or school, when you’re shopping, when you get a medical treatment, and even when you’re sleeping. It’s created by you, your neighbors, and everyone around you. So, how do we ensure it’s used ethically?

Back in 2014, before I entered public service, I wrote a post called Making the World Better One Scientist at a Time that discussed concerns I had at the time about data. What’s interesting, is how much of it is still relevant today. The biggest difference? The scale of data and coverage of data has massively increased since then and with it the opportunity to do both good and bad.

…

With the old adage that with great power comes great responsibility, it’s time for the data science community to take a leadership role in defining right from wrong. Much like the Hippocratic Oath defines Do No Harm for the medical profession, the data science community must have a set of principles to guide and hold each other accountable as data science professionals. To collectively understand the difference between helpful and harmful. To guide and push each other in putting responsible behaviors into practice. And to help empower the masses rather than to disenfranchise them. Data is such an incredible lever arm for change, we need to make sure that the change that is coming, is the one we all want to see.

So how do we do it? First, there is no single voice that determines these choices. This MUST be a community effort. Data Science is a team sport and we’ve got to decide what kind of team we want to be.

Should data scientists adhere to a Hippocratic oath?

From Wired (February 8, 2018):

THE TECH INDUSTRY is having a moment of reflection. Even Mark Zuckerberg and Tim Cook are talking openly about the downsides of software and algorithms mediating our lives. And while calls for regulation have been met with increased lobbying to block or shape any rules, some people around the industry are entertaining forms of self regulation. One idea swirling around: Should the programmers and data scientists massaging our data sign a kind of digital Hippocratic oath?

Microsoft released a 151-page book last month on the effects of artificial intelligence on society that argued “it could make sense” to bind coders to a pledge like that taken by physicians to “first do no harm.” In San Francisco Tuesday, dozens of data scientists from tech companies, governments, and nonprofits gathered to start drafting an ethics code for their profession.

The general feeling at the gathering was that it’s about time that the people whose powers of statistical analysis target ads, advise on criminal sentencing, and accidentally enable Russian disinformation campaigns woke up to their power, and used it for the greater good.

“We have to empower the people working on technology to say ‘Hold on, this isn’t right,’” DJ Patil, chief data scientist for the United States under President Obama, told WIRED. (His former White House post is currently vacant.) Patil kicked off the event, called Data For Good Exchange. The attendee list included employees of Microsoft, Pinterest, and Google.

Schaun Wheeler’s take on codes of data ethics: Not just unimplementable, but built on the wrong foundation

I’m still making up my mind about Schaun Wheeler’s contrarian take on codes of data ethics, but that may be colored by my dislike of Joel Grus’ dickishly libertarian “fuck your ethics” stance. I’ve included Wheeler’s take, along with his interview on Joel Grus’ podcast, Adversarial Learning, for the sake of completeness, with the caveat that I’m undecided on it.

On the difficulty of creating a data science code of ethics (Hackernoon, February 2, 2018):

dj patil recently wrote about the need for a code of ethics for data science. It’s not clear to me that data science as a profession is ready for a code of ethics. Codes are just words unless there is a mechanism to enforce sanctions against people who disregard those codes, and I’m pretty sure no single data science community is cohesive enough to enforce rules even for its own members.

An ethical code can’t be about ethics (Towards Data Science, February 6, 2018):

Last week, I wrote about my skepticism of Data for Democracy’s intent to create a data science code of ethics. My concerns focused on the practical feasibility of the project. After a lot of talking about, reading, and watching the evolution of the D4D code of ethics, I still believe the proposed principles are largely unactionable. I also believe, now, that what the working groups have produced is built on the wrong foundation entirely. This isn’t about iterating forward to a solution. No amount of revision can succeed if you’re building the wrong thing.

We need to be clear on what a code of ethics means. If we can realistically expect everyone in the community to just adopt a code of ethics because they intuitively feel that it’s the good and right thing to do, then the code of ethics is unnecessary — it amounts to nothing more than virtue signaling. If we can’t realistically expect complete organic adoption, then the code is a mechanism to coerce those who disagree with it, to censure people who don’t abide by it. Those two routes — wholesale freewill adoption or coercion — are the only two ways a code of ethics can actually mean anything.

Ed. note: Wheeler uses the phrase “virtue signaling” in this essay, a phrase that I think of as the real-world equivalent of “Hail Hydra”: a way for villains to identify themselves to their comrades.

Can we be honest about ethics? (Hackernoon, March 5, 2018):

Ethics is not a solvable problem but it is a manageable risk. No set of principles, not even a robust legal and regulatory infrastructure, will ensure ethical outcomes. Our goal should be to ensure that algorithm design decisions are made by competent, ethical individuals — preferably, by groups of such individuals. If we improve competency, we improve ethics. Most ethical mistakes come from the inability to foresee consequences, not the inability to tell right from wrong.

An effective ethical code doesn’t need to — in fact, probably shouldn’t — focus on ethical issues. What matters most are the consequences, not the tools we use to bring those consequences about. As long as an ethical code stipulates ways individual practitioners can prove their competence by voluntarily taking on “unnecessary” costs and risks, it will weed out the less competent and the less ethical. That’s the list we should be building. That’s the product that will result in a more ethical profession.

My code of ethics will forbid YAML (Adversarial Learning podcast, May 25, 2018):

Joel Grus’ and Andrew K. Musselman’s podcast, Adversarial Learning, has Schaun Wheeler as a guest to talk about his stance on the proposed code of ethics. When listening to this episode, I couldn’t shake the feeling that I was listening to three smug white guys with (sometimes literally) no skin in the game.

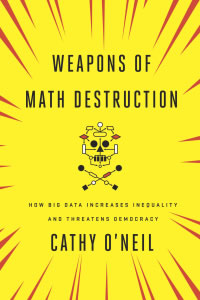

Weapons of Math Destruction, by Cathy O’Neil

Worthwhile reading, or listening (the author reads the audiobook herself). I enjoyed it!

A former Wall Street quant sounds an alarm on the mathematical models that pervade modern life — and threaten to rip apart our social fabric

We live in the age of the algorithm. Increasingly, the decisions that affect our lives—where we go to school, whether we get a car loan, how much we pay for health insurance—are being made not by humans, but by mathematical models. In theory, this should lead to greater fairness: Everyone is judged according to the same rules, and bias is eliminated.

But as Cathy O’Neil reveals in this urgent and necessary book, the opposite is true. The models being used today are opaque, unregulated, and uncontestable, even when they’re wrong. Most troubling, they reinforce discrimination: If a poor student can’t get a loan because a lending model deems him too risky (by virtue of his zip code), he’s then cut off from the kind of education that could pull him out of poverty, and a vicious spiral ensues. Models are propping up the lucky and punishing the downtrodden, creating a “toxic cocktail for democracy.” Welcome to the dark side of Big Data.

deon: An ethics checklist for data scientists

From the deon site:

deon is a command line tool that allows you to easily add an ethics checklist to your data science projects. We support creating a new, standalone checklist file or appending a checklist to an existing analysis in many common formats.

δέον • (déon) [n.] (Ancient Greek) Wiktionary

Duty; that which is binding, needful, right, proper.

The conversation about ethics in data science, machine learning, and AI is increasingly important. The goal of deon is to push that conversation forward and provide concrete, actionable reminders to the developers that have influence over how data science gets done.

Here are the first two sections of the default checklist that deon generates:

Data Science Ethics Checklist

A. Data Collection

- A.1 Informed consent: If there are human subjects, have they given informed consent, where subjects affirmatively opt-in and have a clear understanding of the data uses to which they consent?

- A.2 Collection bias: Have we considered sources of bias that could be introduced during data collection and survey design and taken steps to mitigate those?

- A.3 Limit PII exposure: Have we considered ways to minimize exposure of personally identifiable information (PII) for example through anonymization or not collecting information that isn’t relevant for analysis?

B. Data Storage

- B.1 Data security: Do we have a plan to protect and secure data (e.g., encryption at rest and in transit, access controls on internal users and third parties, access logs, and up-to-date software)?

- B.2 Right to be forgotten: Do we have a mechanism through which an individual can request their personal information be removed?

- B.3 Data retention plan: Is there a schedule or plan to delete the data after it is no longer needed?
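
To make the idea of a generated checklist concrete, here is a minimal Python sketch of how a checklist like the one above could be stored as data and rendered to a Markdown file. It is not deon's implementation or API: the names `Item`, `Section`, and `render_markdown` are hypothetical and exist only for this illustration, and only three of the checklist items quoted above are included.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Item:
    code: str      # item identifier, e.g. "B.3"
    title: str     # short label for the item
    question: str  # the question the team should answer


@dataclass
class Section:
    letter: str    # section identifier, e.g. "B"
    title: str     # section heading
    items: List[Item] = field(default_factory=list)


def render_markdown(title: str, sections: List[Section]) -> str:
    """Render the checklist as Markdown with one unchecked box per item."""
    lines = [f"# {title}", ""]
    for section in sections:
        lines.append(f"## {section.letter}. {section.title}")
        lines.append("")
        for item in section.items:
            lines.append(f"- [ ] **{item.code} {item.title}**: {item.question}")
        lines.append("")
    # Collapse trailing blank lines into a single final newline.
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical sample data, copied from the checklist excerpt above.
CHECKLIST = [
    Section("A", "Data Collection", [
        Item("A.2", "Collection bias",
             "Have we considered sources of bias that could be introduced "
             "during data collection and survey design and taken steps to "
             "mitigate those?"),
    ]),
    Section("B", "Data Storage", [
        Item("B.2", "Right to be forgotten",
             "Do we have a mechanism through which an individual can request "
             "their personal information be removed?"),
        Item("B.3", "Data retention plan",
             "Is there a schedule or plan to delete the data after it is no "
             "longer needed?"),
    ]),
]

markdown = render_markdown("Data Science Ethics Checklist", CHECKLIST)
print(markdown)
```

Writing `markdown` to a file in the project repository would give the team a set of boxes to tick during review. The real tool is run from the command line and supports more output formats; see the deon site for its actual options.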